Photos by Caroline Sherman (Cornell University Athletics)

If you've ever watched basketball, you've probably heard the phrase "hot hand." It refers to the concept of an "athlete having streaks of success higher than their average performance." In basketball specifically, it means that a player who made (or missed) their last shot is more likely to make (or miss) the next one. The debate over whether the hot hand is a real "effect" or just a "fallacy" has been going on for over 40 years.

The Background

The effect was first described in 1985, in "The Hot Hand in Basketball" by Cornell University psychology professor Thomas Gilovich, alongside famed behavioral economist Amos Tversky and Stanford professor Robert Vallone. I'll refer to the collective as GVT, after the initials of Gilovich, Vallone, and Tversky, from this point forward. In the paper, GVT surveyed hundreds of basketball fans from both Cornell and Stanford University to demonstrate how widespread the belief in the "hot hand" actually was: 91% of respondents believed that a player has "a better chance of making a shot after having just made his last two or three shots than he does after having just missed his last two or three shots." Such beliefs influence play: teammates may pass the ball to a player believed to have a "hot hand," just as a defending team may guard that player more closely. Moreover, players may end up taking worse shots if they believe that they are "hot" -- a behavior now known as a "heat check."

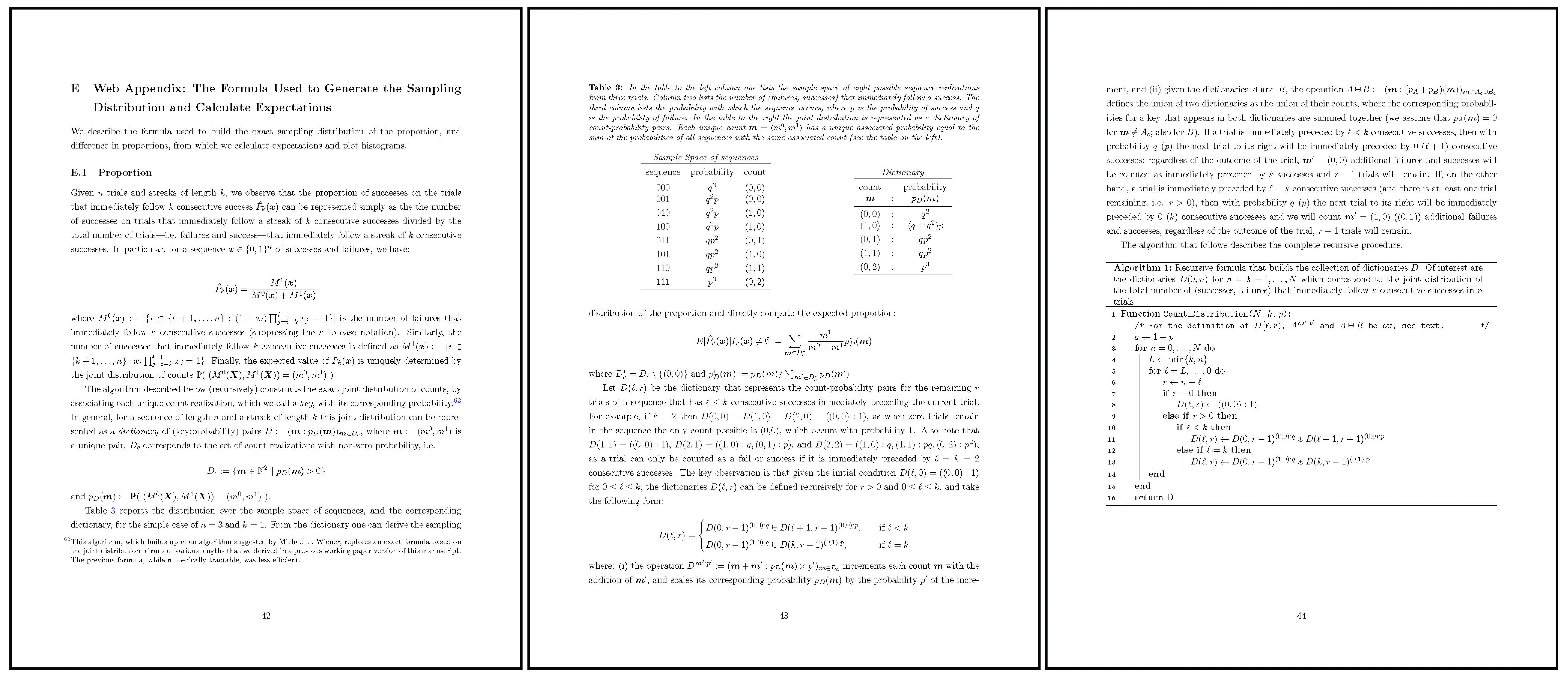

GVT assessed the potential streakiness of basketball players' shots through three methods: analyzing (1) the field goal records of 48 home games played by the Philadelphia 76ers in 1980-81, (2) pairs of free throws by Boston Celtics players in both the 1980-81 and 1981-82 seasons, and (3) the results of a controlled experiment involving a combined 26 players from the Cornell men's and women's basketball teams. In all three cases, GVT found no evidence of streakiness, at both the professional and collegiate levels. They concluded that the hot hand was a fallacy.

Quotes from GVT's paper showing the hot hand as a fallacy.

More than 40 years after the study's original publication, basketball has changed dramatically. At all levels, scoring is higher on account of a faster pace. Players are bigger, faster, and stronger. Teams are significantly more invested in analytics than before. A question arises: do any of those changes affect the results shown in GVT's breakthrough paper?

The Setup

To re-evaluate the hot hand fallacy through the lens of GVT, I reproduced the analysis they conducted on the Philadelphia 76ers' field goal data from the 1980-81 NBA season, using the Cornell University men's and women's basketball teams' shot chart data for the 2025-26 season. Within the data, the hot hand boils down to conditional probability, and the relationship between three key quantities: \(\text{Pr}(H\mid M^i)\), \(\text{Pr}(H\mid H^i)\) and \(\text{Pr}(H)\). Here, \(H\) corresponds to a "hit," or a basket successfully scored, and \(M\) indicates a missed shot. The superscript \(i\) indicates a run of \(i\) consecutive prior hits or misses. So, the notation \(\text{Pr}(H\mid M^2)\) signifies the probability (\(\text{Pr}\)) of a player hitting a shot (\(H\)) given (\(\mid\)) that they missed (\(M\)) the previous two shots. If the hot hand were to exist, then we would expect \(\text{Pr}(H\mid M^i) < \text{Pr}(H) < \text{Pr}(H\mid H^i)\). In words, for \(i = 1\): the probability of hitting a shot right after a hit is higher than the probability of hitting a shot without any prior knowledge, which in turn is higher than the probability of hitting a shot right after a miss.
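The conditional probabilities above can be estimated by counting. What follows is a minimal sketch, not the code behind this article: the function name `hit_rate_after_streak` and the example shot record are hypothetical, and it assumes a player's shots are supplied as one chronological list (whether streaks should carry across games is a separate choice left to the analyst).

```python
from typing import Optional, Sequence


def hit_rate_after_streak(
    shots: Sequence[int], outcome: int, length: int
) -> Optional[float]:
    """Estimate Pr(H | outcome^length) from one chronological shot list.

    shots:   1 for a hit (H), 0 for a miss (M).
    outcome: the value every one of the preceding `length` shots must have.
    length:  the superscript i; 0 gives the unconditional Pr(H).
    Returns None when no shot is preceded by such a streak.
    """
    following = [
        shots[t]
        for t in range(length, len(shots))
        if all(s == outcome for s in shots[t - length:t])
    ]
    if not following:
        return None
    return sum(following) / len(following)


# Hypothetical shot record (not real Cornell data): 1 = hit, 0 = miss.
example_shots = [1, 1, 0, 1, 1, 1, 0, 0, 1, 0]

p_hit = hit_rate_after_streak(example_shots, outcome=1, length=0)
p_hit_after_hit = hit_rate_after_streak(example_shots, outcome=1, length=1)
p_hit_after_miss = hit_rate_after_streak(example_shots, outcome=0, length=1)
print(p_hit, p_hit_after_hit, p_hit_after_miss)
```

A hot hand would show up as `p_hit_after_miss < p_hit < p_hit_after_hit`; this made-up record happens to show the reverse ordering.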

The Results

Narrowing down the data to only include men and women with substantial shooting volume (players who attempted over 100 shots throughout the season) left 10 athletes. For each of these players, I also calculated the serial correlation: the correlation between the outcome of one shot and the outcome of the player's next shot. The serial correlation coefficient \(r\) takes on values between -1 and 1, with \(r > 0\) indicating that hits tend to follow hits (and misses tend to follow misses), and \(r < 0\) indicating the opposite. If the hot hand effect were to exist, at least for the case of one made basket, the correlation coefficient should be positive: \(\text{Pr}(H\mid H^1)\) should be higher than \(\text{Pr}(H\mid M^1)\).
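For reference, here is one way a serial correlation can be computed. This is a sketch that assumes the coefficient is the ordinary Pearson correlation between each shot and the one after it (a lag-1 autocorrelation); the function name `serial_correlation` and both example sequences are hypothetical.

```python
from math import sqrt
from typing import Sequence


def serial_correlation(shots: Sequence[int]) -> float:
    """Pearson correlation between each shot outcome and the next one.

    shots: chronological outcomes, 1 for a hit and 0 for a miss.
    """
    if len(shots) < 3:
        raise ValueError("need at least three shots")
    current, following = shots[:-1], shots[1:]
    n = len(current)
    mean_c = sum(current) / n
    mean_f = sum(following) / n
    cov = sum((c - mean_c) * (f - mean_f) for c, f in zip(current, following))
    var_c = sum((c - mean_c) ** 2 for c in current)
    var_f = sum((f - mean_f) ** 2 for f in following)
    if var_c == 0 or var_f == 0:
        raise ValueError("correlation is undefined without variation in outcomes")
    return cov / sqrt(var_c * var_f)


# Two made-up extremes: a streaky shooter and a perfectly alternating one.
streaky = [1, 1, 1, 1, 0, 0, 0, 0]
alternating = [1, 0, 1, 0, 1, 0]
print(serial_correlation(streaky))      # positive: like outcomes cluster
print(serial_correlation(alternating))  # close to -1: outcomes flip every shot
```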

| Player | Position | Year | P(hit \(\mid\) 3 misses) | P(hit \(\mid\) 2 misses) | P(hit \(\mid\) 1 miss) | P(hit) | P(hit \(\mid\) 1 hit) | P(hit \(\mid\) 2 hits) | P(hit \(\mid\) 3 hits) | Serial Correlation |
|---|---|---|---|---|---|---|---|---|---|---|
| Cooper Noard | G | SR | 0.5 (26) | 0.54 (67) | 0.52 (155) | 0.5 (346) | 0.46 (163) | 0.46 (69) | 0.4 (30) | -0.080 |
| Jake Fiegen | G | SR | 0.63 (24) | 0.46 (50) | 0.51 (111) | 0.55 (269) | 0.58 (134) | 0.56 (70) | 0.54 (37) | 0.079 |
| Adam Hinton | G | SR | 0.72 (18) | 0.57 (49) | 0.54 (119) | 0.48 (259) | 0.45 (112) | 0.45 (42) | 0.53 (15) | -0.070 |
| Jacob Beccles | G | JR | 0.36 (11) | 0.39 (28) | 0.4 (70) | 0.47 (170) | 0.53 (72) | 0.5 (34) | 0.4 (15) | 0.108 |
| Josh Baldwin | G | SR | 0.5 (10) | 0.46 (26) | 0.46 (63) | 0.45 (138) | 0.44 (50) | 0.41 (17) | 0.33 (6) | -0.017 |
| Emily Pape | F | SR | 0.42 (36) | 0.38 (79) | 0.39 (159) | 0.35 (283) | 0.29 (97) | 0.32 (28) | 0.33 (9) | -0.088 |
| Rachel Kaus | G | JR | 0.52 (31) | 0.43 (67) | 0.43 (138) | 0.44 (275) | 0.42 (110) | 0.34 (41) | 0.5 (10) | -0.032 |
| Clarke Jackson | G | JR | 0.59 (17) | 0.56 (48) | 0.45 (102) | 0.46 (227) | 0.48 (98) | 0.47 (45) | 0.45 (20) | 0.032 |
| Paige Engels | G | SO | 0.5 (10) | 0.55 (31) | 0.41 (75) | 0.4 (160) | 0.33 (58) | 0.35 (17) | 0.2 (5) | -0.121 |
| Audrey Chen | G | SO | 0.31 (16) | 0.33 (30) | 0.35 (62) | 0.35 (118) | 0.35 (31) | 0.38 (8) | 1 (1) | 0.011 |
| Weighted Means | | | 0.51 | 0.47 | 0.46 | 0.45 | 0.45 | 0.45 | 0.45 | -0.024 |

Table 1 shows the results of the described calculations: the first three columns list information about the players, columns four through ten display the conditional probabilities (with the unconditional \(\text{Pr}(H)\) in column seven), and the last column shows the aforementioned serial correlation. While the hot hand would imply a clearly positive serial correlation coefficient, six of the ten players' correlations are negative. To gauge how much weight those coefficients carry, I can test the statistical significance of column eleven, which is a way of assessing how unlikely a particular statistic would be under pure chance. In the context of serial correlations, a test of statistical significance answers the question: "if a player's shot outcomes truly had no correlation from one shot to the next, how likely is it to see a result at least as extreme as the one I saw?" If such a result would be very unlikely (with probability less than 5%), then it is statistically significant. Across all ten players, none of the serial correlations are statistically significant.
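As a rough cross-check of that last claim, the sketch below uses a standard large-sample approximation: if shots are independent, a lag-1 correlation \(r\) computed from \(n\) shots gives a \(z = r\sqrt{n}\) that is approximately standard normal. This is not necessarily the test used for this article. The function name is hypothetical, and the shot counts are assumed to be the parenthesized values in the P(hit) column of the table.

```python
from math import erfc, sqrt


def approx_two_sided_p_value(r: float, n_shots: int) -> float:
    """Approximate two-sided p-value for a lag-1 serial correlation.

    Under independence, z = r * sqrt(n) is roughly standard normal,
    so the two-sided p-value is erfc(|z| / sqrt(2)).
    """
    z = r * sqrt(n_shots)
    return erfc(abs(z) / sqrt(2))


# (serial correlation, shot attempts) copied from the table above; treating
# the parenthesized P(hit) values as attempt counts is an assumption.
players = {
    "Cooper Noard": (-0.080, 346),
    "Jake Fiegen": (0.079, 269),
    "Adam Hinton": (-0.070, 259),
    "Jacob Beccles": (0.108, 170),
    "Josh Baldwin": (-0.017, 138),
    "Emily Pape": (-0.088, 283),
    "Rachel Kaus": (-0.032, 275),
    "Clarke Jackson": (0.032, 227),
    "Paige Engels": (-0.121, 160),
    "Audrey Chen": (0.011, 118),
}

for name, (r, n) in players.items():
    p = approx_two_sided_p_value(r, n)
    print(f"{name}: p = {p:.2f}, significant at 5%: {p < 0.05}")
```

Under this approximation every player's p-value stays above 0.05, in line with the conclusion above.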

An effect might still be present among the remaining columns. To check, I ran paired t-tests for differences in the means between columns four and ten, columns five and nine, and columns six and eight. Each of those tests fails to produce evidence in favor of the hot hand: each mean difference between \(\text{Pr}(H\mid H^i)\) and \(\text{Pr}(H\mid M^i)\) is negative (and statistically insignificant), meaning players tend to have slightly worse shooting outcomes when conditioning on consecutive hits versus consecutive misses (\(t=-1.10, p=0.30\) for columns six and eight, \(t=-1.78, p=0.11\) for columns five and nine, and \(t=-0.62, p=0.55\) for columns four and ten). Outside of the statistical tests, the final row of the table, the weighted means, tells the same story: the probability of hitting the next shot sits at 0.45 after one, two, or three makes, below the 0.46, 0.47, and 0.51 observed after one, two, and three misses. No matter which way you slice it, for Cornell players, the hot hand effect does not exist -- the same result that GVT got 40 years ago with their 76ers analysis.

I have not given you the full story, though. In 2018, 33 years after the GVT paper was published, two researchers from the Universidad de Alicante, Joshua B. Miller and Adam Sanjurjo, revisited the original study. What they found was a measurement error so unintuitive that it had escaped the brightest economists and psychologists for over three decades.

The Twist

The seemingly innocent calculation of conditional probability is where the error lies. To see it, imagine you took all of the shot outcomes of a fictitious player who shoots 50% from the field and whose shot attempts are truly independent from each other -- meaning the hot hand does not exist for this player. If you created a string of those attempts, it might look like this:

Now say you select every stretch of the above string in which some outcome is preceded by three consecutive hits (all \(HHH \rule{.5cm}{0.15mm} \) substrings) and put them into a bucket. If you chose one of those filtered substrings at random and looked at the outcome of the subsequent shot (the \(\rule{.5cm}{0.15mm}\) in the above sequence), you would expect the probability of observing an \(H\) to be exactly 50%: after all, the fictitious player truly does not have a hot hand. But that probability is not exactly 50%; it is actually slightly lower.

Consider the random selection of a sequence out of all the filtered substrings in the bucket. Imagine that selection was \(HHH \rule{.5cm}{0.15mm} \), with the first \(H\) being the 10th shot in the full string. If the selection were actually \(HHHH\), there would be another \(HHH \rule{.5cm}{0.15mm} \) sequence, this time starting from the 11th shot, and that other sequence would also go into the bucket. But if the selection were actually \(HHHM\), that other sequence would not exist -- the bucket would remain the same size. That means that if the sequence were \(HHHH\), the probability of selecting it would be slightly lower than the probability of selecting the sequence at the same position had it been \(HHHM\) (since the \(HHHH\) case adds another sequence to choose from). The difference in selection probability means that for any selected \(HHH \rule{.5cm}{0.15mm} \) sequence, the next shot is more likely to be an \(M\) than an \(H\), despite the true shooting probability being 50%. Similar principles create a downward bias in \(\text{Pr}(H\mid H^i)\) calculations for sequences of one prior hit or two consecutive prior hits.
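A small worked case may make the bias concrete. Take the simplest version of the same setup, chosen here purely for illustration: three independent shots at 50%, conditioning on one prior hit. Of the eight equally likely sequences, two (\(MMM\) and \(MMH\)) have no hit in the first two shots and drop out. Each of the remaining six contributes its own proportion of hits among the shots that follow a hit:

\[
\begin{array}{c|cccccc}
\text{Sequence} & MHM & HMM & HMH & MHH & HHM & HHH \\ \hline
\text{Hits after a hit} & 0/1 & 0/1 & 0/1 & 1/1 & 1/2 & 2/2
\end{array}
\]

\[
\frac{1}{6}\left(0 + 0 + 0 + 1 + \tfrac{1}{2} + 1\right) = \frac{5}{12} \approx 0.417
\]

Averaged over sequences, which is what happens when a percentage is computed from one finite shot record at a time, the proportion comes out near 42% rather than 50%, even though every shot is a fair coin flip.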

If you are not convinced, consider the below simulation, which performs the described conditional probability calculations for \(\text{Pr}(H\mid H^i)\) over a chosen number of simulated sequences of \(n\) consecutive shots from our fictitious, no-hot-hand player.

In 100 simulations of 10 shots, the estimated probability for \(\text{Pr}(H\mid H^2)\), or the probability of \(H\) given 2 consecutive hits, is __, even though it should be 50%.
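The same calculation can be run offline. The sketch below is a hypothetical stand-in, not the code behind this article: it computes, for each sequence of \(n\) fair shots, the share of hits among shots that follow \(k\) consecutive hits, then averages that share across sequences. One function does this exactly by enumerating all \(2^n\) sequences, and another does it by seeded random simulation. All function names and the seed are assumptions.

```python
import random
from fractions import Fraction
from itertools import product
from typing import Optional, Sequence


def proportion_after_streak(shots: Sequence[int], k: int) -> Optional[Fraction]:
    """Share of hits among shots that immediately follow k straight hits.

    shots: 1 for a hit, 0 for a miss. Returns None if no shot in the
    sequence is preceded by k consecutive hits.
    """
    followers = [shots[t] for t in range(k, len(shots)) if all(shots[t - k:t])]
    if not followers:
        return None
    return Fraction(sum(followers), len(followers))


def exact_expected_proportion(n: int, k: int) -> Fraction:
    """Average the per-sequence proportion over all 2**n equally likely
    sequences of n fair shots, skipping sequences where it is undefined."""
    values = [
        p
        for seq in product((0, 1), repeat=n)
        if (p := proportion_after_streak(seq, k)) is not None
    ]
    return sum(values, Fraction(0)) / len(values)


def simulated_proportion(n: int, k: int, n_sims: int, seed: int = 0) -> float:
    """Monte Carlo version of the same average, using a seeded generator."""
    rng = random.Random(seed)
    values = []
    for _ in range(n_sims):
        seq = [rng.randint(0, 1) for _ in range(n)]
        p = proportion_after_streak(seq, k)
        if p is not None:
            values.append(p)
    if not values:
        raise ValueError("no simulated sequence contained a qualifying streak")
    return float(sum(values, Fraction(0)) / len(values))


print(exact_expected_proportion(3, 1))          # 5/12
print(float(exact_expected_proportion(10, 2)))  # exact average for 10 shots
print(simulated_proportion(n=10, k=2, n_sims=100))
```

With only 100 simulated sequences the Monte Carlo estimate is noisy, so the enumerated value is the steadier reference; for 10 shots and 2 prior hits it falls below 50%.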

The takeaway from the measurement error that Miller and Sanjurjo found is that each \(\text{Pr}(H\mid H^i)\) estimate is biased downward (and each \(\text{Pr}(H\mid M^i)\) estimate upward), meaning GVT were underestimating the hot hand effect. Correcting for the bias is relatively simple -- you just add back the amount of bias to the original statistic. Actually calculating the amount of bias to add is considerably more difficult, since no closed-form solution exists for \(\text{Pr}(H\mid H^i)\) for \(i > 1\). Instead, to calculate the expected proportion of made shots, Miller and Sanjurjo, in "Surprised by the Hot Hand Fallacy? A Truth in the Law of Small Numbers," provide a recursive formula: